Sensory XR May Be All in Your Head

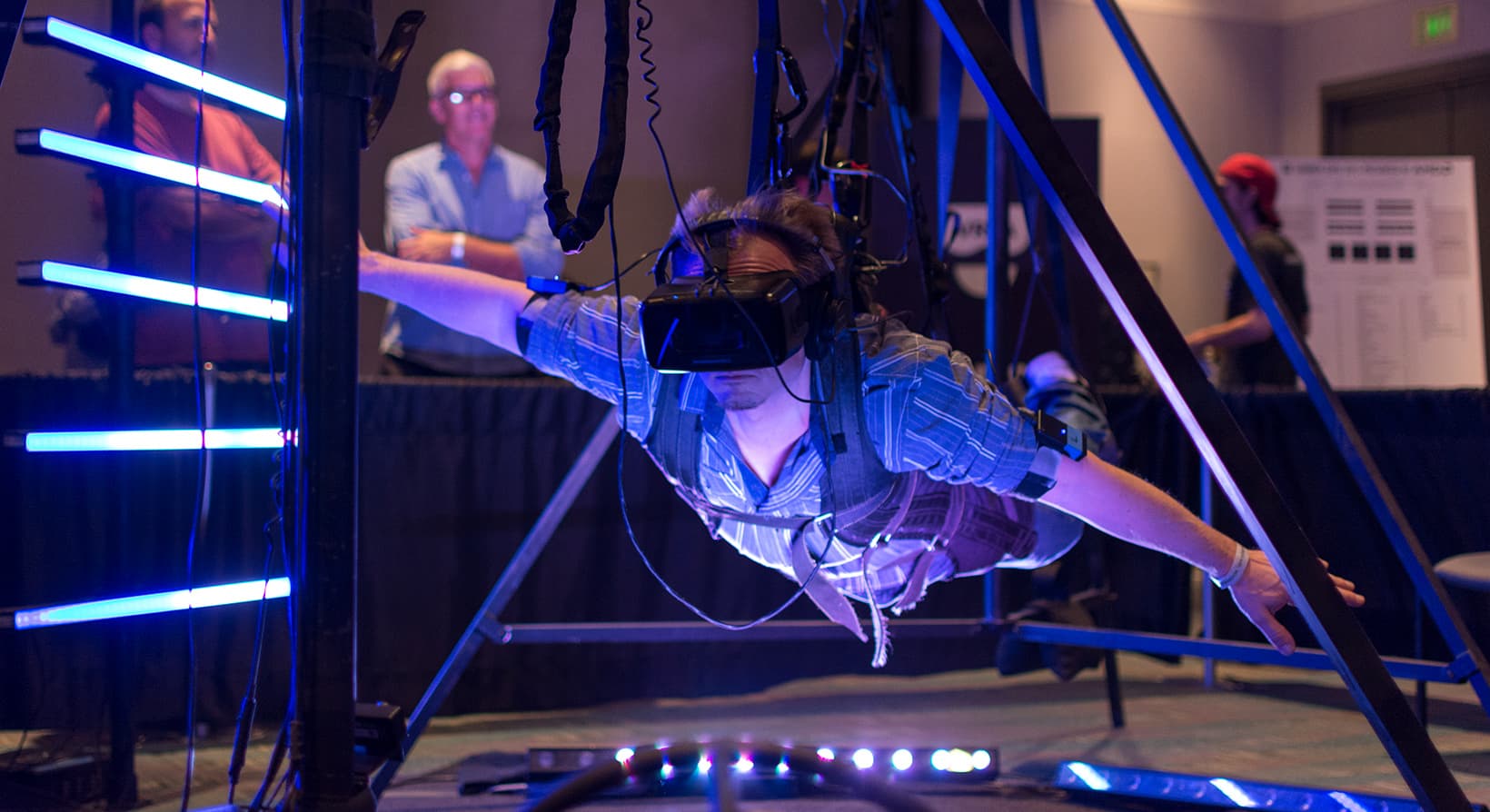

Graphics and screens have taken a giant leap forward in the Extended Reality world over the last few years. Innovators have worked tirelessly to turn stereoscopic imagery from a novelty into a powerful technology with applications across many industries. While visuals still have room to improve before they trick the human eye perfectly, that goal seems achievable very soon.

But visuals are not enough.

Haptic, auditory, olfactory, thermal, and atmospheric inputs are the key to really tricking our brains into placing us in that digital land. So more dedicated disruptors are focusing on creating XR accessories. And the quality of those extra senses is catching up to visuals – because no experience will ever achieve true suspension of disbelief without the sensory data our brains require to convince us we’re in another world.

It’s even harder than it sounds.

If we’re talking about completely effective immersion, absolutely indistinguishable from real life, we mean control over everything the user does – or does not – experience.

Are we by the ocean? The user needs to feel the wind, hear the surf, and smell the salt mingling with kelp and other sea life. Are we in the desert? The user needs warm air and the sensation of walking across loose sand.

At this point, you can see how monumental the challenge is, right?

We haven’t gotten to the point where the XR tech also keeps out the real-world input that is likely to conflict with the immersive experience. If you’re detecting the scent of an exotic botanical garden in a virtual world, yet someone in the room is eating a spicy stir-fry, the inconsistency might be enough to crack the experience’s effectiveness. Likewise, a dogsled tour of the Arctic will only feel authentic with real icy air on exposed skin.

The equipment and environment a user will need for this level of immersion will be difficult to replicate and maintain across millions of users.

Or is there another path inward? A bypass around expensive and cumbersome hardware?

Brain-computer interfaces (BCIs) are also being developed, and this seemingly sci-fi technology might provide solutions when hardware falls short.

BCIs were initially explored as a method of capturing input from users, allowing them to operate computers and other machines with nothing more than a thought. This tech could be used for anything from operating a prosthetic limb to giving simple commands to a personal media device.

Both implant-based and non-invasive topical methods are being studied, and we are likely to see a variety of products come to fruition to meet an array of needs, from medical to entertainment and everything in between.

Pioneers like Neurable are developing real gear that can literally read your mind. They have partnered with leading VR brands like HTC and Microsoft. Neurable currently offers a modified Vive to developers on a limited basis, and they may soon offer a modified HoloLens as well.

It’s important to remember that Neurable’s current focus is on user command input and visual device output. Will they and other BCI developers expand to sensory interaction? We think the potential is there.

As neurotechnology and immersive experiences continue to intertwine, we could see everything from incredibly lifelike VR to artificial telepathy. It sounds wild, trust us, we know! But we’re moving into a world in which magic will be real and at all our fingertips.

Stambol disruptors live to talk tech – and we love to help businesses envision a future with XR. Ask us how immersive technology can help you, both today and tomorrow.

Image Credit: metamorworks / Adobe Stock